How Enterprises Strengthen AI Vendor Partnerships with Carefully Crafted Oversight Controls

Shipra, Lead Engineer DevOps at United Airlines, details how enterprises embed oversight into AI platforms to scale autonomy without surrendering accountability.

Buying an AI platform is not the same as achieving responsible AI adoption. As agentic systems move deeper into enterprise and customer-facing workflows, the question shifts from feature sets to control: what responsibilities remain non-transferable, no matter how sophisticated the vendor? Governance, accountability, identity control, and the authority to intervene cannot be outsourced. When AI acts inside live business environments, internal ownership must stay intact, and the strongest vendor partnerships are designed around that boundary from the start.

Shipra is a Lead Engineer, DevOps at United Airlines, where she designs automation-first foundations for enterprise-scale systems. With previous roles at NielsenIQ, IBM, and Microland focused on cloud infrastructure, identity automation, and network architecture, she brings deep experience in building governance into the infrastructure layer. That foundation shapes her view that AI security requires the same discipline: ownership and accountability cannot be engineered away or delegated outside the organization.

"I’m comfortable outsourcing tooling, but not accountability, identity control, or governance. If AI is acting inside our environment, we must retain visibility and the ability to intervene instantly," says Shipra. For security teams navigating AI adoption, that distinction demands a fundamental reframing of ownership, one that the most responsibly built AI platforms are beginning to design around. Buying tools is not the hard part. The harder question is what happens when those tools act autonomously inside an environment, and who is accountable when something goes wrong.

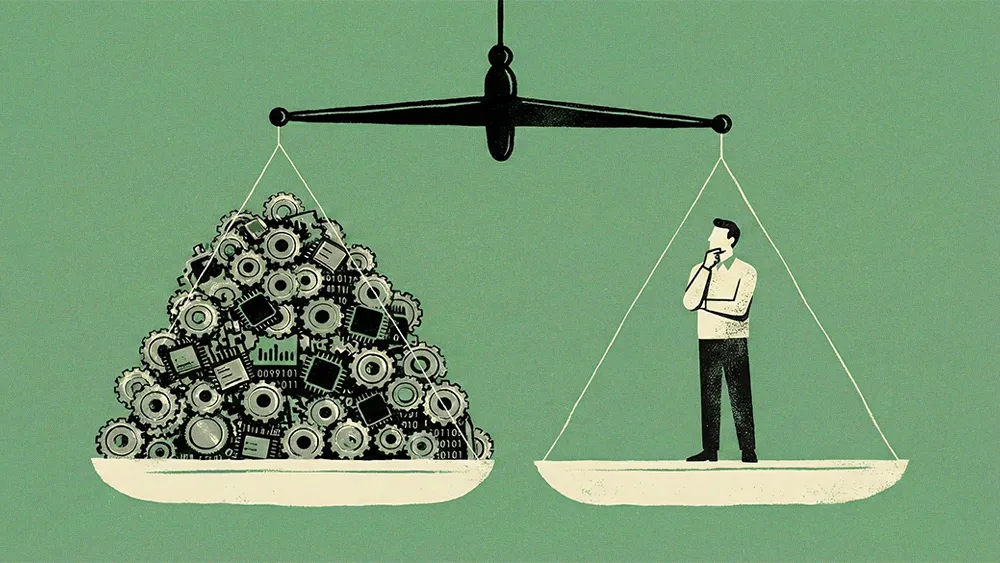

That principle is foundational because no external platform, no matter how advanced, carries the full business context required for high-stakes decisions. As AI expands across operational and customer-facing workflows, only internal teams fully understand the business logic behind a signal, recommendation, or anomaly, and whether it’s routine, risky, or revenue-impacting. Responsible vendors don’t attempt to replace that judgment. They design systems that preserve visibility, enable intervention, and reinforce shared accountability, recognizing that context and ultimate responsibility remain inside the organization.

Context is king: "Internal context becomes the differentiator when security decisions depend on business logic and operational nuance. External tools can detect patterns, but only internal teams truly understand impact," says Shipra. The distinction matters most when the stakes are highest, when an anomaly's true significance depends not on pattern recognition but on knowing what the business stands to lose.

New dashboard, same problem: The real test for new agentic capabilities is whether they reduce the cognitive load on human analysts, rather than simply shifting review effort from an alert queue to a different AI dashboard. "Automation lowers cost when it increases signal clarity. If it simply shifts review effort from alerts to AI outputs, then we haven’t reduced risk. We’ve redistributed it," she notes. The same concern sits behind growing government initiatives to manage AI risk and the rise of new state-level oversight bodies.

The implication is that effective AI integration demands structural change, not just new tooling. Organizations must rethink how oversight, validation, and accountability are embedded into workflows, an evolution that’s becoming increasingly necessary as AI systems take on more autonomy and operate closer to revenue and customer-critical processes.

Down to the studs: Effective AI integration requires structural alignment, not incremental add-ons. Organizations must realign oversight, validation, and accountability to match the speed and impact of AI-driven decisions. As Shipra puts it, "Embedding AI means rethinking how data flows, how decisions are validated, and how accountability is tracked. It’s not a feature. It’s an architectural shift. Using AI tools is just plugging something into the SOC, but embedding AI means redesigning the workflows and data pipelines around it."

Trust but verify: Under this model, autonomy is earned and scaled gradually, a philosophy that aligns with emerging international codes of practice for AI. It's a standard that forward-thinking platforms are beginning to operationalize, with automated agent testing frameworks that validate AI behavior before it ever reaches a customer. "Autonomy should scale gradually. AI can recommend broadly, but enforce narrowly. High-impact actions must always require human validation until confidence and auditability are proven," she says.
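For readers who want to picture "recommend broadly, enforce narrowly" in practice, here is a minimal Python sketch of an approval gate. It is an illustration only, not a description of any system used at United Airlines or by any vendor. The names `Impact`, `ProposedAction`, and `ActionGate` are hypothetical, and the two-level impact scale is an assumption made for brevity.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Impact(Enum):
    """Hypothetical two-level impact scale (an assumption for this sketch)."""
    LOW = "low"
    HIGH = "high"


@dataclass
class ProposedAction:
    """An action an AI agent recommends; approved_by names the human reviewer, if any."""
    name: str
    impact: Impact
    approved_by: Optional[str] = None


@dataclass
class ActionGate:
    """Lets low-impact actions run and holds high-impact ones for a human."""
    audit_log: List[str] = field(default_factory=list)

    def decide(self, action: ProposedAction) -> str:
        # High-impact actions never execute without a named human approver.
        if action.impact is Impact.HIGH and action.approved_by is None:
            outcome = "needs_human_approval"
        else:
            outcome = "execute"
        # Every decision is recorded so accountability can be traced later.
        self.audit_log.append(
            f"{action.name}: {outcome} (approved_by={action.approved_by})"
        )
        return outcome


gate = ActionGate()
print(gate.decide(ProposedAction("tag_alert_as_duplicate", Impact.LOW)))
print(gate.decide(ProposedAction("disable_user_account", Impact.HIGH)))
print(gate.decide(ProposedAction("disable_user_account", Impact.HIGH, approved_by="analyst_on_call")))
```

In this sketch the agent can propose anything, but only low-impact actions run unattended; a high-impact action is held until a person signs off, and each decision lands in an audit log. Widening autonomy over time would mean reclassifying specific actions as low impact once their track record supports it.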

The goal of this framework, she says, is to redefine and elevate the role of the human analyst. In a hybrid human-AI model, the machine is optimized for processing massive volumes of data: the alerts, pattern matching, and routine triage. The premium shifts to what machines cannot replicate, freeing up the human partner to do what they do best: exercise judgment and provide the final layer of accountability. "The best analysts won't be the fastest clickers. They'll be the strongest critical thinkers. AI handles volume. Humans handle judgment," Shipra concludes.