Contact Centers Are Overcoming the AI Deflection Trap by Balancing Automation with Human Support

Tarah Nelson, Head of CX at Rosabella, explains why AI dashboards can hide real customer frustration and how smarter automation design keeps both agents and customers on track.

Key Points

Enterprise AI often looks successful on dashboards but traps customers in frustrating chatbot loops, hiding dissatisfaction and pushing burned-out agents to handle only the hardest interactions.

Tarah Nelson, Head of CX at Rosabella, says teams must look beyond response times and contact volumes by reviewing real conversations and understanding how automation changes both customer and agent experiences.

Nelson says leaders get better results when they clean up processes first, route high-stakes issues to humans, and use AI selectively instead of trying to automate every interaction.

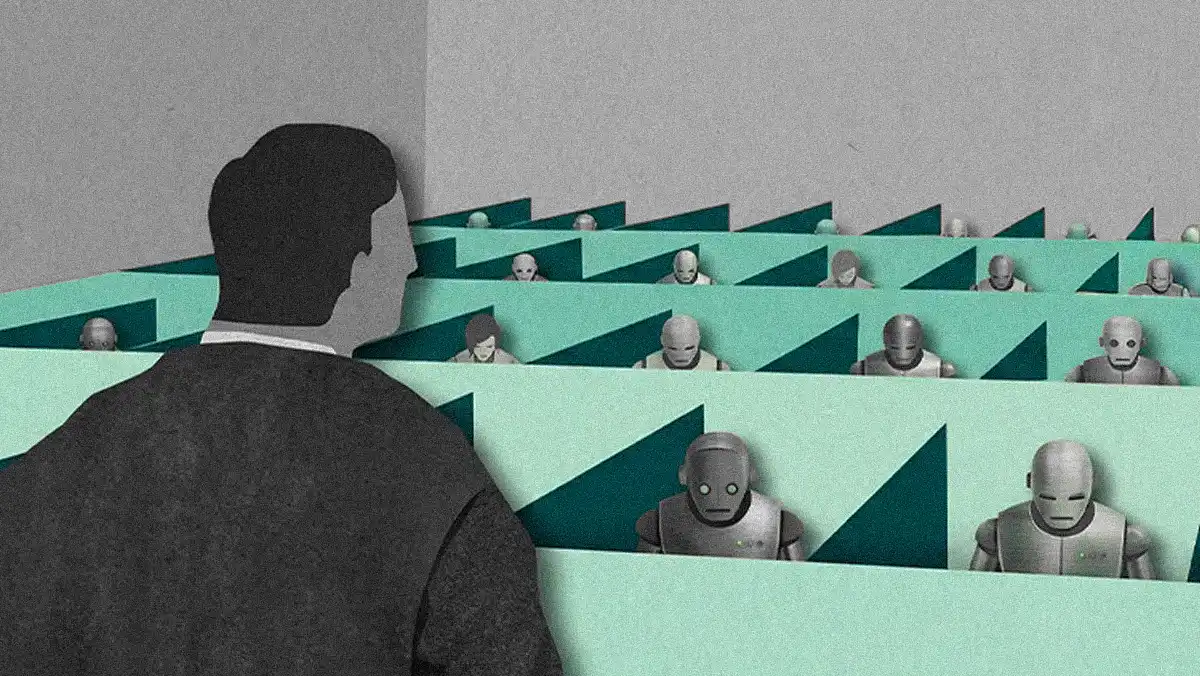

Enterprise AI promises quick efficiency gains in the contact center, and the dashboards usually deliver. Response times drop, contact volumes shrink, and the rollout looks like a clear win. Yet under the surface, customers often experience something very different: automated chatbot loops that feel suspiciously similar to the frustrating IVR systems companies spent years trying to escape. The gap between metrics and lived customer experience is forcing leaders to rethink how automation should actually support human service.

Tarah Nelson, Head of CX for the wellness brand Rosabella, is an expert at bridging the gap between CFOs looking for efficiency and the operational reality of the contact center floor. Drawing on nearly a decade of experience leading high-impact CX teams, she knows firsthand what happens when the C-suite treats AI as a turnkey cost cutter. Instead of seamless efficiency, she often sees short-term deflection gains showing up right beside rising customer frustration and employee burnout.

"If you deflect all the easy conversations to AI, your agents end up dealing with frustrated customers all day long. That leads to burnout, higher attrition, and ultimately more cost than the automation was supposed to save." She explains that positive movement in metrics like response times and volumes can mask negatives that lie deeper in the customer and agent experience.

Seeing red: Nelson says the disconnect often stems from the way leaders evaluate success. "We call it the watermelon effect. Your dashboards are all green on the surface, but underneath, your customers are just angry. They're all red-faced," she says. A heavy focus on vanity metrics creates a false sense of security, even as pockets of dissatisfaction show up as negative third-party reviews and social complaints.

Read the transcripts: To fix the blind spot, Nelson notes that the best teams pair quantitative dashboards with qualitative reviews of actual conversations. "Don't look at just what your dashboards are telling you. Review the conversations. Review the tickets with the bot versus the ones with your agents. Figure out who's more escalated and why."

The tech is only half the story. Automation amplifies the processes it's built on, meaning a bot deployed over a messy knowledge base simply scales the existing dysfunction. If an automated system blindly sends return instructions to a customer complaining about a botched order, for example, that usually reflects unresolved policy questions or inconsistent playbooks on the floor. Nelson says leaders can't assume AI is plug-and-play. "Maybe you have processes you haven't cleaned up. Maybe you have an old SOP from here, but your team is using an SOP from another system. Maybe your reps are using one macro, but you've added a new one since then. You have to look at all of the pieces and how they fit together in the puzzle." She says that before flipping the switch, operators have to do the unglamorous work of cleaning house and identifying which tasks to automate and which to escalate.

Sympathy doesn't scale: Customer expectations add another layer of friction. Consumers want fast service, but when they're upset, they actively try to bypass automation entirely, highlighting the importance of readily available human agents. "AI will never be able to replicate sympathy," Nelson asserts. "It may come across as that, but people almost immediately know when they're talking to a robot versus when they're talking to a live human."

Press 0 for PTSD: The resulting friction highlights the gap between AI expectations and current operational realities. For a stressed customer, encountering a rigid chatbot triggers memories of endless phone menus, prompting them to repeatedly hit '0' to find a human. "It's like IVR gave us PTSD," she muses. "People are looking at AI and assuming it is a bot that will do the exact same thing that phone number from 1996 did, where they have to press numbers over and over to get help." According to Nelson, designing an effective routing strategy requires building around that psychological baseline.

Behind the customer-facing experience sits a predictable operational bottleneck for the human workforce. Some organizations aim to deflect nearly all simple queries to bots, assuming the math works out in their favor. But when bots skim all the easy wins, the work that reaches human queues can quickly become disproportionately high-stakes, leaving human agents in a constant state of emotional combat. To prevent turnover, Nelson suggests a counterintuitive fix: intentionally preserving some easy tickets for human agents. "I would rather see them take care of a routine where-is-my-order question and know that they're getting that two-to-five-minute break instead of facing unhappy customers repeatedly throughout the day to the point that they no longer want the job," she says. By using workflows and unified command centers for human and AI agents, leaders can route a portion of simple inquiries to agents just to give them a mental reset.
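One way to picture that kind of workflow rule is the minimal sketch below. It is an illustration only, not Nelson's or any vendor's implementation: the function name `assign_simple_tickets` and the `every_nth` parameter are hypothetical, and the one-in-five default is an arbitrary assumption rather than a figure from the interview.

```python
from typing import Dict, List


def assign_simple_tickets(ticket_ids: List[str], every_nth: int = 5) -> Dict[str, str]:
    """Send every Nth simple ticket to a human; the rest go to the bot."""
    if every_nth < 1:
        raise ValueError("every_nth must be at least 1")
    assignments: Dict[str, str] = {}
    for position, ticket_id in enumerate(ticket_ids, start=1):
        # Hypothetical rule: reserve one in every `every_nth` easy tickets
        # for a person, so agents get a routine ticket between hard ones.
        assignments[ticket_id] = "human" if position % every_nth == 0 else "bot"
    return assignments


if __name__ == "__main__":
    print(assign_simple_tickets(["t1", "t2", "t3", "t4", "t5"]))
```

A fixed interval keeps the share of reserved tickets predictable; a real deployment would likely tune that share against queue load and agent feedback.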

Truth in the numbers: Replacing burned-out agents carries steep costs, requiring ongoing recruiting and training as headcount fluctuates. Those downstream costs can change how AI investments look to finance leaders. Short-term savings from cutting payroll look great when dashboards emphasize deflection, but Nelson stresses that a complete financial model accounts for the compounding costs of replacing employees and the revenue hit of losing long-term customers. "Attrition could skyrocket. Churn could skyrocket. Sure, I may be saving $80,000 in payroll costs, but what about the $1.2 million that I'm going to lose because we've churned those customers?"
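Nelson's illustrative figures can be put into a rough back-of-the-envelope model. The sketch below uses only the two numbers she quotes; the function name `net_impact` and the optional `replacement_costs` term are assumptions added for illustration, and no replacement-cost figure appears in the interview.

```python
def net_impact(
    payroll_savings: float,
    churned_revenue: float,
    replacement_costs: float = 0.0,
) -> float:
    """Net financial effect of an automation rollout.

    A negative result means the downstream losses outweigh the savings.
    """
    # Savings minus lost customer revenue minus the cost of rehiring
    # and retraining agents (left at zero unless a figure is known).
    return payroll_savings - churned_revenue - replacement_costs


if __name__ == "__main__":
    # $80,000 saved in payroll against $1.2 million lost to churn.
    print(net_impact(80_000, 1_200_000))
```

With those two inputs alone, the result is a net loss of $1.12 million, before any recruiting or training costs are counted.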

To decide which interactions go to automation and which go to people, Nelson advises teams to consider two dimensions. "Weigh the impact on the customer and the business risk. If both are high, that interaction should go to a human," she advises. In practice, this approach requires an adaptive analysis rather than a rigid rulebook. "How is the customer going to feel on the end of that conversation? If an upset customer calls, sure, I can push it to a robot. But what happens when I push it? Are they going to cancel their subscription? Are they going to return their order? Tell all their friends, post on social media, take it to the BBB? You need to weigh the business impact of it and the customer impact of it."
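The two-dimension test could be sketched roughly as follows. Only the "both high means human" rule comes from Nelson; the `Level` enum, the `route` function, and the `"case_by_case"` fallback are hypothetical names standing in for the adaptive analysis she describes, not a prescribed rulebook.

```python
from enum import Enum


class Level(Enum):
    LOW = "low"
    HIGH = "high"


def route(customer_impact: Level, business_risk: Level) -> str:
    """Apply the two-dimension check to a single interaction."""
    # From the interview: high customer impact plus high business risk
    # means the interaction should go to a human.
    if customer_impact is Level.HIGH and business_risk is Level.HIGH:
        return "human"
    # Assumption: every other combination is left to a judgment call
    # rather than being automated by default.
    return "case_by_case"


if __name__ == "__main__":
    print(route(Level.HIGH, Level.HIGH))
    print(route(Level.LOW, Level.HIGH))
```

How each dimension gets scored as low or high is deliberately left open here, since that is the part Nelson says depends on how the customer is likely to react.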