
One Product Leader Warns That Plummeting Support Volume Is Often A Wall, Not A Win

Mukta Dhanuka, a Product Lead with two decades of experience, argues that companies celebrating dramatic drops in support volume after AI deployment are often mistaking suppression for success, and that the fix is building systems for deep listening.

Make CX Current News one of your go-to sources on Google


When a company deploys an AI support system and sees response times and SLA attainment improve by 90% while ticket volume drops by around 60%, the leadership team usually celebrates. The cost of operations falls. The efficiency metrics improve. Bonuses follow. But the improvement rarely means the company has solved most of its problems. More often, it means customers gave up trying to reach a human.

Mukta Dhanuka is a product leader whose two decades of building and scaling products span technology companies including SAP, Square, and Meta, as well as board and advisory roles. Her perspective covers B2B, B2C, and B2B2C at every stage of product and organizational maturity.

"If your support issues have gone down drastically over a few months or even a year, especially in a single category, that alone shouldn't be celebrated without understanding why," says Dhanuka. "There is a high likelihood that you haven't solved all the problems. You've potentially just created a wall for people to reach you."

The problem, she explains, cuts across companies of all sizes and geographies. The drivers vary, but they tend to cluster around a few recurring failures: technical illiteracy as teams rush to adopt fast-moving technology, metric engineering tied to bonuses and short-term contracts, fragmented process ownership where no single person understands the full customer journey, and the absence of realistic executive performance targets that would guard against the slow erosion of brand value and customer loyalty.

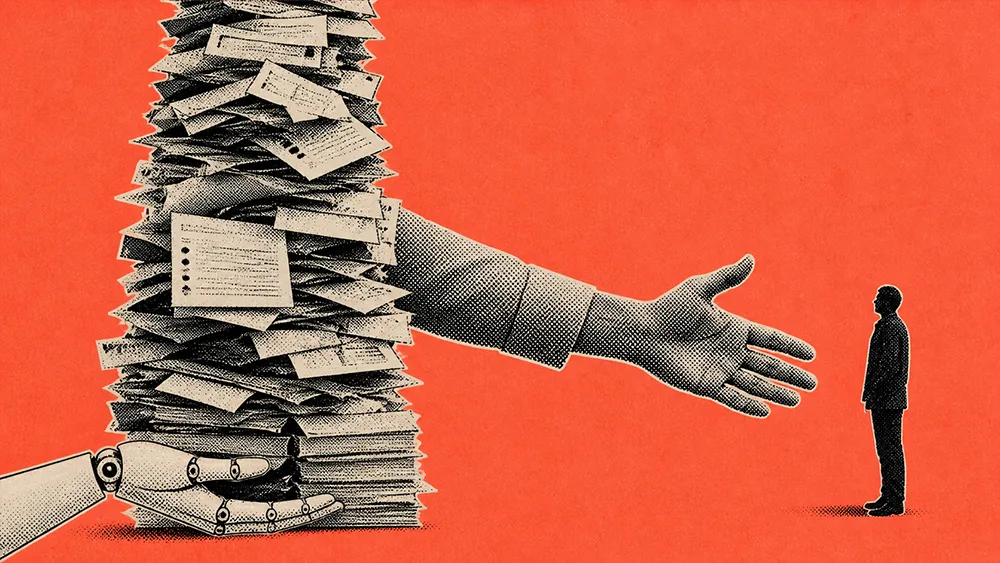

The incentive trap: The dynamic is sharper in companies run by hired executives than in those led by founders. "Their short-term contractual gains are often dependent on short-term margins. They're not going to be around in ten years," Dhanuka says. When leadership sets targets to reduce the cost of operations by 80 or 90 percent without having fixed the underlying product or service issues, "they're just shoving them under the rug. It will show up in a different way." The deeper issue, she adds, is that leaders are often incentivized to make things look green. Only distributed ownership, with clear accountability and metrics that ladder up, actually drives consistency in ground-level execution.

Lagging signals: The consequences of suppressed feedback rarely appear immediately. Brand sentiment can erode slowly or, increasingly, overnight through a single viral post. Customer acquisition costs rise. Sales cycles lengthen. Lifetime value declines. "Most likely it will be a lagging indicator versus a leading indicator," Dhanuka explains. By the time boards see the numbers move, the damage has been compounding for months.

The leading indicator: There is one early warning sign worth watching. "If your support issues have gone down drastically but you haven't really done the work to improve your product or service, then you have your answer," she says. Often, she adds, the deeper issue is that senior leadership is structurally disconnected from ground-level support and success signals; the metrics look fine at the top precisely because no one is translating what is happening on the front line. Dhanuka recalls advising a customer during her time building cloud products: when a decline in customer messages surfaced, the team flagged that users were likely disengaging entirely rather than becoming happier. A check of the portal confirmed it: fewer sales orders, fewer service requests, fewer active users. The principle, she notes, is even more relevant today given how fast AI is being deployed.

The fix is not removing AI from customer support. It is redesigning the system to surface truth rather than hide it. Dhanuka calls this building for "deep listening," and she offers several concrete starting points.

Simplify categorization: Most support interfaces present either 20 menu items that nobody reads or no categorization at all. Both create a mapping problem, because customers and companies rarely use the same language to describe the same issue. Dhanuka recommends fewer than five clear categories, such as qualitative feedback, refund request, compensation claim, and legal concern. "If you see a sudden drop in a certain category of complaints but you haven't done the work to improve in that category, then you have your answer." The structure also helps manage risk: if a refund claim looks unusual or falls outside normal parameters, a path that routes it directly to legal offers a practical safeguard.

Separate the incentives: Sales, brand management, and post-sales service should not report to a single leader whose goal is operational efficiency. "If you put them all under one senior leader, you will not get the signals you need," Dhanuka warns. Independent reporting lines for returns data, brand sentiment, and sales performance create the objective feedback loops that catch problems early. The org-structure recommendation flows from her core insight: distributed ownership with clear accountability, not consolidated control, is what drives consistency.

Be your own customer: The simplest diagnostic is also the most underused. Dhanuka recommends that executives and board members be willing to go through their own support experience: hold the line, navigate the IVR, wait for the email reply, and get the real signal. "Pretend to be your own customer. Ask yourself: did I enjoy holding that line? Would I want to deal with this brand again? If something does not feel right, it's probably not right." She also recommends segmenting by customer tenure and cohort and paying specific attention to complaint signals from long-term customers, because a drop in complaints from that group often means loyalty is being spent, not earned.

The broader risk extends beyond brand erosion. Dhanuka points to a growing wave of regulatory scrutiny and warns that liability lines between technology providers, implementers, and the companies deploying AI remain unclear. Compliance exposure is real across jurisdictions, and regulations will inevitably catch up.

"This is a good time for boards and C-level executives to think about what kind of targets they are setting," she says. "Just because they are in a race to adopt AI does not mean they should rush and inadvertently create conditions that will harm the company in the long run. Make it easy for the right people to reach you. Make it hard for the bad actors to rip you off. That's the right trade-off."