How Field Observation and Inclusive Design Are Shaping The Next Wave of Voice AI

Will Croushorn, a QSR product leader behind 600+ voice AI locations, details how store-floor insights and inclusive design can power the next generation of customer experience.

The views and opinions expressed are those of Will Croushorn and do not represent the official policy or position of any organization.

As conversational AI moves into drive-thrus and storefronts, success comes down to how well these systems handle the reality of in-person interactions. Customers don’t speak in perfect prompts. They gesture, use shorthand, and improvise, expecting to be understood without over-explaining. The opportunity lies in designing AI that can operate inside that messiness, where context is scattered, workarounds are routine, and meaning is often implied. Teams that build with those conditions in mind are the ones creating voice experiences that actually feel intuitive in the real world.

Will Croushorn is a Product Lead at a global Tier 1 QSR brand, where he oversees one of the world's largest generative AI deployments in physical retail: an enterprise voice assistant that has scaled across hundreds of locations and handled over 45 million orders to date. His background ranges from leading SaaS innovation in logistics and recruiting to co-founding a school in northern Iraq, where he achieved fluency in Kurdish to build trust within a multilingual community. This cross-cultural, field-first sensibility directly informs his approach to building AI systems grounded in the nuance of real human behavior.

"Not all issues surface in a dashboard. You have to be in the real world to understand where the friction truly lies," says Croushorn. He believes that while fragmented data undermines AI across industries, a larger gap exists in teams designing voice agents remotely, isolated from the actual interactions between customers and employees. Spending time on-site can reveal the subtle patterns, improvised workarounds, and emotional pain points that no standalone dataset can capture.

The pickle puzzle: Enterprise data architectures often promise a unified view of the customer, yet most voice agents still operate with only a fraction of the context they need. When a customer asks for "the green thing" while customizing a burger, a human worker instantly scans the surrounding context and reaches for the pickles, while a model stalls. "Everything lives in silos, and that severely limits how these models are able to operate in the physical world." Closing that gap starts with unifying the data streams an agent can access so that ambient signals, like what is already in a customer's order, become part of the model's decision-making toolkit.
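To make the "green thing" example concrete, here is a minimal sketch of how order context could narrow a vague reference. Everything in it is an assumption for illustration: the ingredient colors, the recipe data, and the `resolve_vague_reference` helper are hypothetical and do not describe Croushorn's system.

```python
# Hypothetical ingredient attributes; in practice these might come from unified menu data.
INGREDIENT_COLORS = {
    "pickles": "green",
    "lettuce": "green",
    "tomato": "red",
    "cheese": "yellow",
    "onion": "white",
}

# Hypothetical recipe data: which ingredients each menu item contains.
ITEM_INGREDIENTS = {
    "cheeseburger": ["pickles", "cheese", "onion"],
    "garden burger": ["lettuce", "tomato", "pickles"],
}


def resolve_vague_reference(utterance, current_item):
    """Return ingredients on the current item whose color appears in the utterance."""
    words = utterance.lower().split()
    candidates = []
    for ingredient in ITEM_INGREDIENTS.get(current_item, []):
        if INGREDIENT_COLORS.get(ingredient) in words:
            candidates.append(ingredient)
    return candidates


# With the order context, the vague phrase resolves to a single ingredient.
print(resolve_vague_reference("the green thing", "cheeseburger"))   # → ['pickles']

# When two ingredients match, the agent still needs a clarifying question.
print(resolve_vague_reference("the green thing", "garden burger"))  # → ['lettuce', 'pickles']
```

The point of the sketch is that the phrase alone is ambiguous; it is the item already in the order that shrinks the candidate list, and an empty or multi-item result is a cue to ask rather than guess.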

Paper beats program: Beneath every documented process, employees inevitably develop informal workarounds to keep operations running smoothly. Whether a crew member jots a note on a receipt to bypass a POS glitch or a grocery team memorizes a manual override, these unwritten routines remain invisible to language models trained strictly on official documentation. "If you don't account for the entire system, AI agents will fail the moment they hit a roadblock that the workaround usually solves," Croushorn notes.

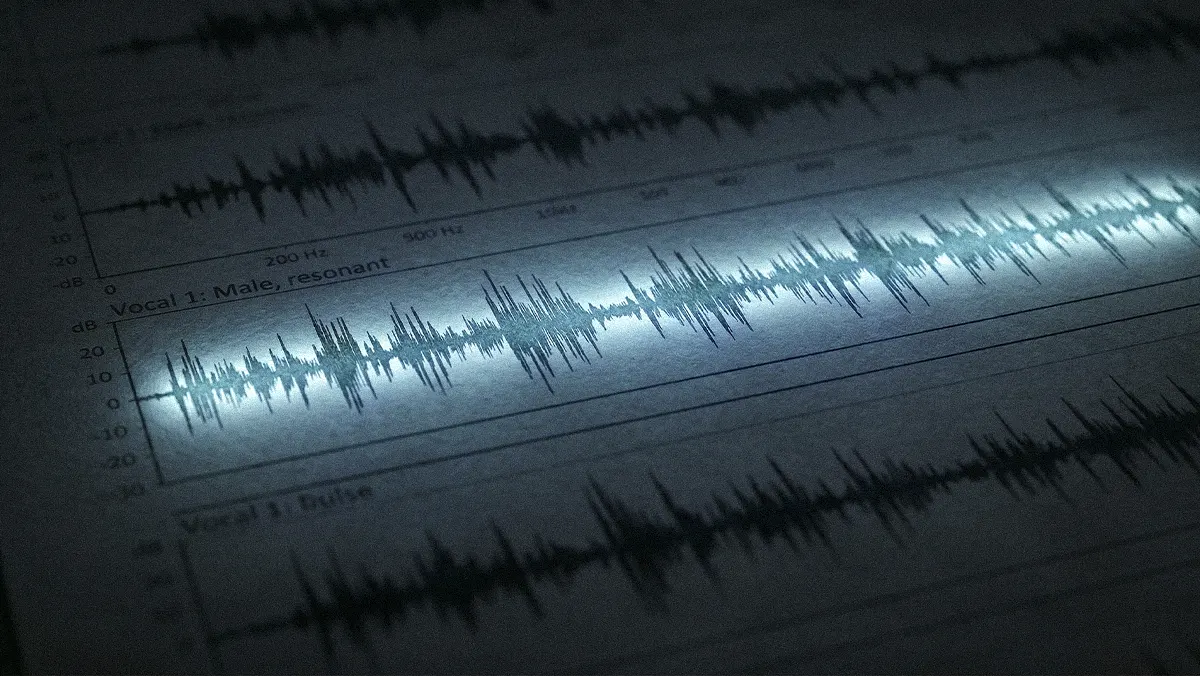

Whether it is a language barrier, an unaccommodated speech pattern, or a confusing interface, a rigid system can leave a customer feeling dismissed rather than assisted. Designing for these realities requires diverse teams, rigorous field research, and a willingness to sit with the discomfort of watching a product fall short in real time. The industry is beginning to pivot toward this human-centric approach, prioritizing research into stutter-sensitive speech recognition and inclusive accessibility updates that extend the reach of voice-first technology.

The cucumber conundrum: Croushorn draws on his five years living in northern Iraq to illustrate why communication breakdowns carry real emotional weight. While running a business overseas, he once attempted to buy a single cucumber without speaking Kurdish and walked out with two full bags. "That experience has always stayed with me," he says. "How do we build this technology so that doesn't have to happen?" That question now anchors how he approaches voice AI for diverse customer populations: the goal is to eliminate helplessness, whether the barrier is linguistic, cognitive, or situational.

Seat at the table: Voice AI that works for one demographic often excludes others. Customers with Parkinson’s, stutters, or hearing impairments frequently encounter friction that standard models aren't built to handle. Croushorn’s team has made progress in accommodating these atypical speech patterns, enabling customers who were previously sidelined to successfully complete voice-driven orders. Scaling that level of inclusion requires representation from the start. "We need a diverse group of people at the table to inform what we’re building," Croushorn says. "Otherwise, we’re just building technology that excludes."

No AI model can realistically be trained on every unwritten rule or linguistic edge case, making a well-designed human handoff essential. Customers still prefer human support for nuanced problems, and organizations investing in human-led support hubs continue to treat skilled agents as a strategic asset.

AI-human loop: Some of the most effective AI deployments gather context and narrow intent before a customer ever realizes a human is being brought into the loop. Croushorn points to Amazon’s customer service chatbot as a benchmark. In a recent interaction, the system recognized his order, suggested likely issues, and offered a seamless opt-out. "It captured everything it needed before determining it couldn't help and escalating to a human," he says. "Even before announcing the handoff, it was already dialing, so the wait time was minimal."

Measuring the mea culpa: Once the core system is running reliably, identifying remaining friction requires looking beyond standard dashboards. Croushorn's team discovered that one of its most revealing signals was the AI agent saying "sorry." Tracking that phrase across thousands of interactions exposed clusters of unresolved pain points that conventional metrics missed entirely. "Tracking every instance of an agent apologizing isn't standard practice, but it's an incredibly valuable indicator of where friction lives and where we need to focus," Croushorn explains.
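As a rough illustration of the apology signal, the sketch below counts agent turns that contain an apology and groups them by topic. The transcript format, the topic labels, and the apology patterns are all assumptions made for this example, not details of the team's actual pipeline.

```python
import re
from collections import Counter

# Hypothetical apology patterns; a real deployment would tune these to its agent's phrasing.
APOLOGY = re.compile(r"\b(sorry|apologi[sz]e|my apologies)\b", re.IGNORECASE)


def apology_counts(turns):
    """Count agent turns containing an apology, grouped by topic label.

    `turns` is an iterable of dicts with hypothetical keys:
    'speaker' ('agent' or 'customer'), 'topic', and 'text'.
    """
    counts = Counter()
    for turn in turns:
        # Only the agent's apologies count; customers say "sorry" for other reasons.
        if turn["speaker"] == "agent" and APOLOGY.search(turn["text"]):
            counts[turn["topic"]] += 1
    return counts


# Made-up sample turns, for illustration only.
sample_turns = [
    {"speaker": "agent", "topic": "customization", "text": "Sorry, I didn't catch that."},
    {"speaker": "customer", "topic": "customization", "text": "No pickles, sorry."},
    {"speaker": "agent", "topic": "payment", "text": "Your total is $8.50."},
    {"speaker": "agent", "topic": "customization", "text": "I apologize, could you repeat the topping?"},
    {"speaker": "agent", "topic": "menu", "text": "I'm sorry, that item isn't available."},
]

counts = apology_counts(sample_turns)
for topic, n in counts.most_common():
    print(topic, n)
```

On the sample data, the customization topic surfaces with two agent apologies and the menu topic with one, which is the kind of cluster a team could then investigate on the store floor.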

Taking inspiration from how Walt Disney World reimagined its queues, Croushorn envisions a future where AI transforms historically frustrating chores, like waiting in a drive-thru line, into smooth, personalized experiences. “How can we use this technology to serve customers in ways we couldn’t before?” Croushorn concludes. “The goal is to turn a historically negative interaction into an experience people want to repeat, helping them do what they came to do in a way that wasn’t possible before.”